Vet AI vendors before you sign.

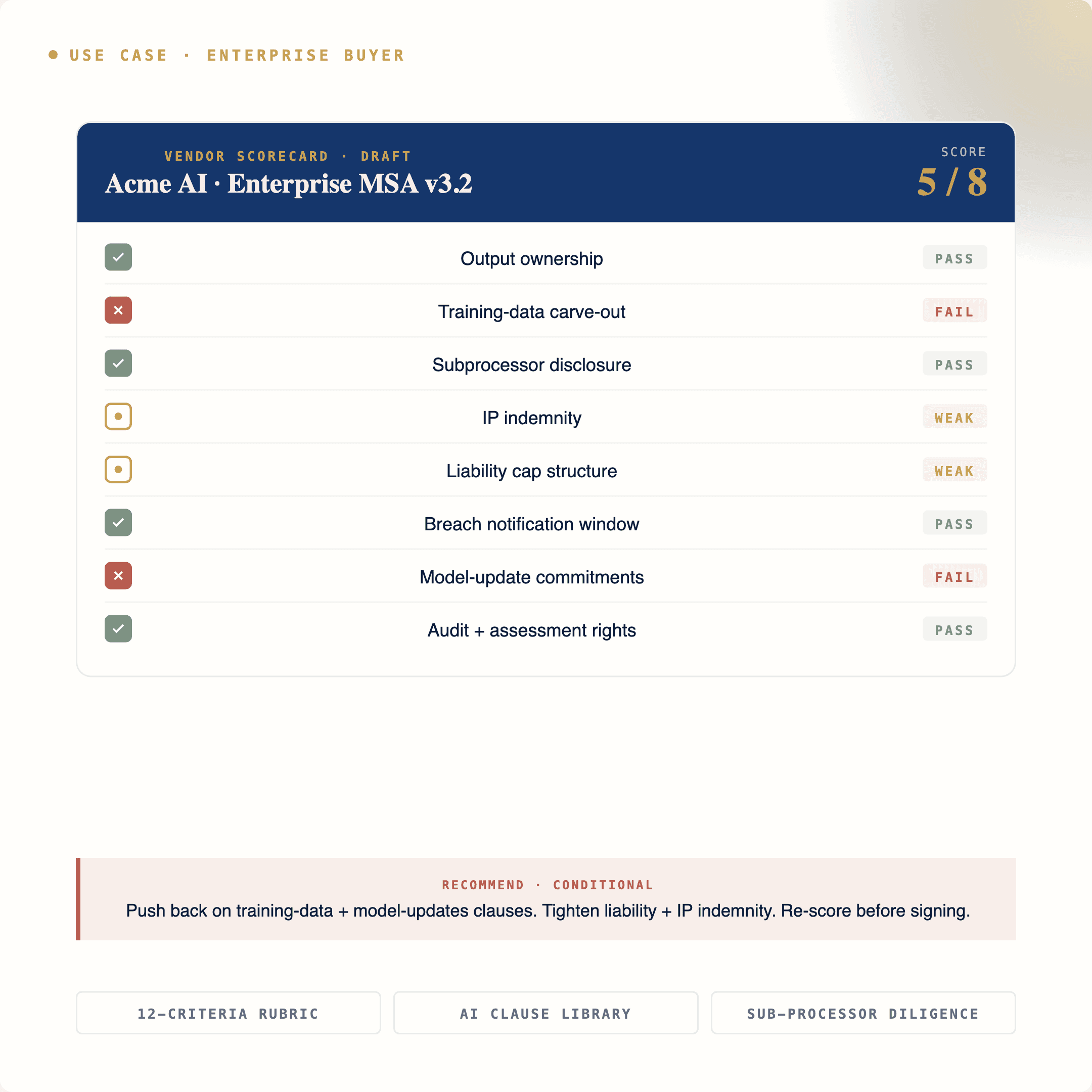

We prepare procurement, legal, and product teams to evaluate AI vendors against a working rubric: the same framework that catches output-ownership traps, training-data gotchas, and gaps in the AI-specific indemnities most buyers wave through.

Three things buyers run into the moment they start scoring AI vendors.

AI vendors won’t answer the questions you should be asking

Where does the training data come from? Are you fine-tuning on our prompts? Who owns the outputs? Most AI vendor sales pages don’t answer these — and most procurement questionnaires don’t know to ask.

Procurement is scoring AI risk for the first time

Your scoring sheet was built for SaaS, not AI. The clauses you’re used to negotiating don’t cover training data, model outputs, prompt confidentiality, or AI-specific indemnities. Buyers without a current rubric get caught flat-footed.

The AI-specific clauses are still negotiable

Vendors will move on output ownership, indemnity carve-outs, and training-data exclusions, but only if you push. Teams that arrive with a working rubric win concessions on terms other buyers wave through.

Five steps. End-to-end. Defensible under audit.

The same framework we license to in-house teams. Pre-screen, score, diligence, negotiate, document. Not a checklist. A working capability your team runs every time procurement forwards an AI vendor for review.

Pre-screen the vendor

Public posture check: AI Use Policy, sub-processor list, training-data disclosures, certifications (SOC 2, ISO 42001, ISO 27001). If they can’t produce these, you have your answer.

Score the AI risk

Score the vendor against a 12-criteria rubric, including model provenance, training-data lineage, output rights, prompt confidentiality, fine-tuning policy, indemnity scope, sub-processor list, transfer mechanism, and audit rights.

Diligence the sub-processors

AI vendors build on other AI vendors. Check the vendor’s sub-processor list against the GDPR Article 28 terms in your DPA template. Confirm each downstream AI vendor is contractually covered, not assumed.

Negotiate the AI clauses

Output ownership, training-data carve-outs, AI-specific indemnity, prompt confidentiality, super-caps for AI claims, fine-tuning prohibitions. Use the clause library; don’t draft from scratch.

Document the decision

Approval/rejection record, scoring rubric output, redlined contract, and a renewal trigger. The audit trail is the artifact that proves you ran a defensible review.

The operational kit your team uses on every AI vendor.

- AI vendor scoring sheet · 12-criteria rubric: built into your team’s workflow

- AI clause library · 20+ clauses · annotated: output rights, training data, prompt confidentiality

- Sub-processor diligence checklist: Article 28-aligned, AI-specific

- Indemnification matrix: AI-specific carve-outs, super-caps, exclusions

- Vendor questionnaire template: what to actually ask before you sign

- Post-sign monitoring playbook: renewal triggers, sub-processor change tracking

The 12 criteria every AI vendor is scored on.

- Model provenance & training-data lineage

- Output ownership & license scope

- Prompt confidentiality & retention

- Fine-tuning policy

- Sub-processor list (Article 28)

- Cross-border transfer mechanism

- AI-specific indemnity scope

- Liability super-cap

- Audit rights & evidence cadence

- Certifications (SOC 2, ISO 42001)

- Breach response & notification

- Termination & data return

Built for the teams owning AI vendor review.

Procurement

Owners of the AI-vendor scoring process. The rubric, the questionnaire, the rejection record — built into the procurement workflow.

In-house counsel

Legal review of AI vendor contracts. Output ownership, training-data clauses, indemnity, sub-processor diligence — without redrafting from scratch every time.

Product & engineering

Leaders shipping AI features who need to evaluate the AI vendors their product depends on. Same framework, role-tailored entry point.

Security & risk

AI-specific risk scoring layered on top of standard SaaS questionnaires. Sub-processor monitoring, certifications, breach response alignment.

The AI-policy checkbox stops being scary.

Procurement reviews start clearing on the first pass. AI clauses get negotiated, not waved through. The team has the same answer in every deal.

“Before the program, AI vendors were a coin flip. After, our procurement team has a working scorecard and our legal team has the clause library to back the pushback. Last quarter we negotiated four AI-specific indemnity carve-outs we would have signed away.”

License the corporate training program.

The vendor review framework, the scoring rubric, the AI clause library, and the implementation support to make them operational across procurement, legal, product, and security — all delivered through The SaaS Law Clinic corporate training program.

Stop signing AI vendors blind.

Bring the vendor review framework in-house. We’ll customize the rubric, train your team, and stay close for the first 90 days while it lands in your workflow.